What the BERT Update Means to my SEO Strategy

Google’s algorithms are how the search engine makes sense of the millions of pages online. They’re the mechanism by which Google works out the subject and meaning of each page. In turn, the search engine can then match the right pages to the correct search queries. Those are the basics of how you see relevant results when you type a search into the Google home page.

SEO is all about positioning your pages as high in Google’s rankings as possible for as many relevant queries as you can. It takes a lot of time, effort, and often expense to get it right. The best first step toward effective SEO is to understand as much as you can about Google’s algorithms. When the search engine updates those algorithms, it’s crucial to be across it.

At the end of October 2019, Google rolled out one of the most significant updates in recent years. Called the BERT update, it’s a change to the search engine’s algorithms that’s vital to understand. Our guide will tell you everything you need to know. As you read on, you’ll learn:

- What the BERT update is

- How it will affect search

- What the update means for Google users, SEO pros and site owners

- How you can optimise your SEO strategy for the BERT update

What is the BERT Update?

The rollout of the BERT update was announced in a blog post that went live on October 25th, 2019. In that post, Pandu Nayak, Google Fellow and Vice President of Search, revealed that the update had begun rolling out the previous week.

That initial rollout applied to English language queries, including featured snippets. Google’s ultimate plan is for the BERT update to apply to all languages for which it offers search. That’s according to a tweet from Google Search Liaison Danny Sullivan. Sullivan also explained that there isn’t yet a set timeframe for rolling the update out to all languages.

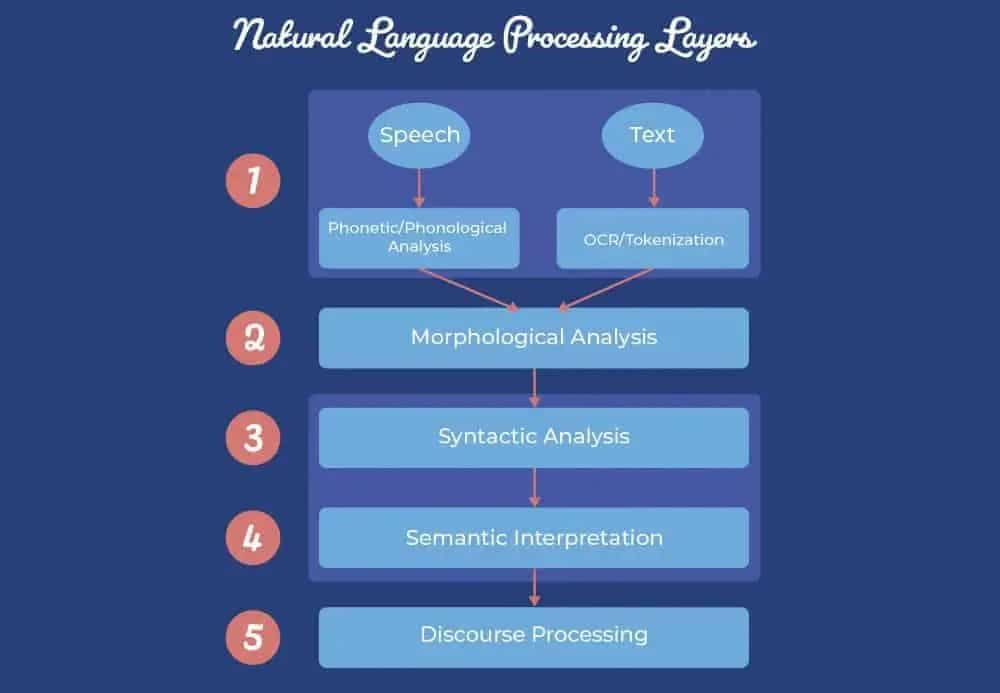

BERT stands for Bidirectional Encoder Representations from Transformers. So, it’s little wonder Google went with the acronym. BERT is a neural network-based technique for natural language processing (NLP). In case that sounds like gobbledygook to you – don’t worry, you’re not alone – let’s break down what that’s all about.

Neural Networks & NLP

Neural networks are collections of algorithms. They’re systems that can be ‘trained’ to recognise patterns in data. You train neural networks through a process called machine learning. The networks are shown vast swathes of data and learn to identify patterns within it. They then apply those pattern-recognition skills to new data sets. The entire process is only possible with massive amounts of processing power.
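To make the idea of ‘training’ a little more concrete, here is a minimal sketch of a single artificial neuron (a perceptron) written in Python. It is a toy for illustration only and has nothing like the scale of the network behind BERT. The function names, the three training examples and the learning rate are all assumptions made up for this example.

```python
# Toy perceptron: a single artificial neuron, NOT anything Google uses.
# It is shown three labelled examples and adjusts its weights after every mistake.

def predict(weights, bias, inputs):
    """Return 1 if the weighted sum of the inputs is positive, else 0."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation > 0 else 0

def train_perceptron(samples, epochs=10, learning_rate=1.0):
    """Classic perceptron rule: nudge the weights towards each missed target."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# Hypothetical training data: output 1 whenever at least one input is switched on.
TRAINING_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1)]

weights, bias = train_perceptron(TRAINING_DATA)

# (1, 1) was never shown during training, yet the learned pattern still applies.
unseen_prediction = predict(weights, bias, (1, 1))
```

The neuron starts out knowing nothing, corrects itself each time it gets a training example wrong, and ends up handling an input it has never seen. Real networks repeat the same basic loop across millions of weights, which is where the need for so much processing power comes from.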

Neural networks can make sense of varied kinds of data. That data can be the pixels of thousands of images or the many numbers that make up a set of financial accounts. To train the network behind the BERT update, Google used plain text: the whole of English Wikipedia, along with a large corpus of books.

NLP is a process made possible by the artificial intelligence (AI) of neural networks. The networks get trained on textual data. The aim is to allow them to understand language on a more human level. How we use language, after all, is more complicated than standard computers or machines can grasp.

NLP, and similar tech-based attempts to analyse language, are not new to the search and SEO field. Google’s BERT, though, represents a significant step forward in NLP. That’s thanks to the technique’s use of specific models called transformers.

Transformers & Bi-Directional Context

To grasp the importance of transformers, it’s worth first thinking about online search more generally. Nayak did a good job of exactly that in his post announcing the BERT update. He explained search as follows:

“At its core, search is about understanding language. It’s our job to figure out what you’re searching for and surface helpful information from the web, no matter how you spell or combine the words in your query.”

Figuring out precisely what searchers are looking for is the holy grail for Google. The search engine has made significant leaps in that regard over the years. Its ability to grasp the meaning of complex or conversational queries, though, has often left a bit to be desired.

That’s why many searchers often don’t use such queries. You yourself have probably typed a string of keywords into Google many times. It’s something that we all naturally know works better. How we’d typically ask a human a question isn’t how we’d phrase it for Google. The BERT update is looking to change that.

The ‘T’ in BERT stands for transformers. In this context, transformers are a particular type of neural network architecture. They can process the individual words in any query in relation to all other words in the query. Transformers can discern the exact meaning of any word according to the context in which it’s used.

The best way to explain this is with an elementary example. Consider the following sentences:

- The worker boxed up all the products ready for shipping.

- The fighters boxed for the full twelve rounds before the bout was decided by a split decision.

A human reading those sentences understands instantly that ‘boxed’ has a different meaning in each. Thanks to transformers, a search algorithm can come to the same conclusion. It’s now able to consider the whole context of the sentences as we would. That’s a significant step forward in NLP, in anyone’s book.
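For the technically curious, the ‘boxed’ example can be sketched in a few lines of Python. This is a heavily simplified toy version of the attention step that transformers are built around. It is not BERT itself: the two-number word vectors below are invented by hand for illustration, whereas a real model learns its vectors from data and stacks many attention layers on top of one another.

```python
import math

# Hypothetical hand-made word vectors: (packaging sense, combat-sport sense).
# A real transformer learns vectors with hundreds of dimensions from data.
TOY_VECTORS = {
    "boxed": (0.5, 0.5),      # ambiguous on its own
    "products": (1.0, 0.0),
    "shipping": (1.0, 0.0),
    "fighters": (0.0, 1.0),
    "rounds": (0.0, 1.0),
    "bout": (0.0, 1.0),
}

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def contextual_vector(word, sentence):
    """Blend the vectors of every word in the sentence, weighted by similarity to `word`."""
    tokens = sentence.lower().replace(".", "").split()
    vectors = [TOY_VECTORS.get(token, (0.0, 0.0)) for token in tokens]
    query = TOY_VECTORS[word]
    scores = [sum(q * v for q, v in zip(query, vec)) for vec in vectors]
    weights = softmax(scores)
    return tuple(sum(w * vec[i] for w, vec in zip(weights, vectors)) for i in range(2))

packing = contextual_vector("boxed", "The worker boxed up all the products ready for shipping.")
fighting = contextual_vector(
    "boxed",
    "The fighters boxed for the full twelve rounds before the bout was decided by a split decision.",
)

# In the first sentence 'boxed' leans towards packaging, in the second towards sport.
print(packing, fighting)
```

The same starting vector for ‘boxed’ ends up in two different places depending on the words around it. That, in miniature, is what it means for a model to read a word in context.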

As impressive as all of that sounds, what’s it got to do with search and SEO? That’s the critical question.

How Will BERT Change Search?

The BERT update is going to impact a whole lot of search results. Google plans to apply BERT to both rankings and featured snippets in search. Taking ranking results alone, BERT will help Google better understand 10% of all searches in the US in English. The number of searches impacted will then increase further as the update extends to other languages and locations.

Queries most likely to be affected by the update are longer, more conversational ones. These are the queries that Google’s algorithms have had the most trouble understanding. The search engine’s improved grasp of such search terms will impact both users and SEOs. Let’s get into a few details about how BERT will make a difference to those two diverse groups.

Improvements for the User

The main impact of the BERT update will be on people performing Google searches. The greater contextual understanding of queries will allow Google to deliver far more relevant results. The search engine’s post announcing the BERT update worked through some examples of how it works in practice. It’s worth dwelling upon a couple of those examples here.

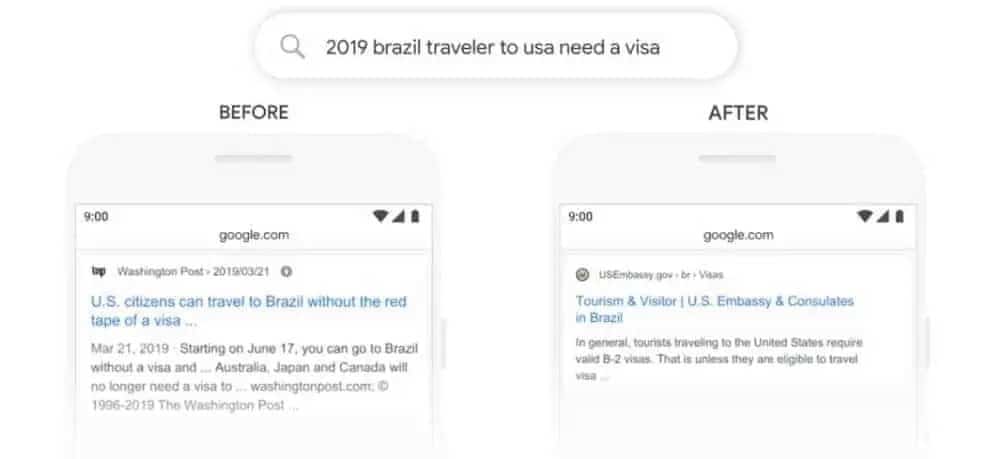

First, the post presented the search query “2019 brazil traveler to usa need a visa”:

In that particular query, one of the most critical words was also one of the shortest. The word ‘to’, and its relation to the other words, defines the information the searcher is looking for. They are planning a trip from Brazil TO the USA and need to get a visa.

Previously, Google wouldn’t have appreciated the importance of the ‘to’. As the image above shows, the search engine used to show results about travelling to Brazil. Thanks to the BERT update, those irrelevant results no longer feature on the SERP.
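A short Python sketch shows why word order matters so much here. It is only an illustration of the general problem with treating a query as a loose collection of keywords, and it makes no claim about how Google’s earlier systems actually worked. The second query and both function names are made up for this example.

```python
def keyword_set(query):
    """Treat the query as an unordered collection of keywords."""
    return set(query.lower().split())

def ordered_tokens(query):
    """Keep the words in the order the searcher typed them."""
    return query.lower().split()

brazil_to_usa = "2019 brazil traveler to usa need a visa"
usa_to_brazil = "2019 usa traveler to brazil need a visa"  # hypothetical opposite trip

# As loose keywords, the two trips are indistinguishable...
same_keywords = keyword_set(brazil_to_usa) == keyword_set(usa_to_brazil)

# ...but the word order around 'to' tells them apart.
same_order = ordered_tokens(brazil_to_usa) == ordered_tokens(usa_to_brazil)

print(same_keywords, same_order)
```

Both queries contain exactly the same words, so any approach that ignores order and context cannot tell which country is the destination. Reading ‘to’ in relation to the words either side of it is what resolves the question.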

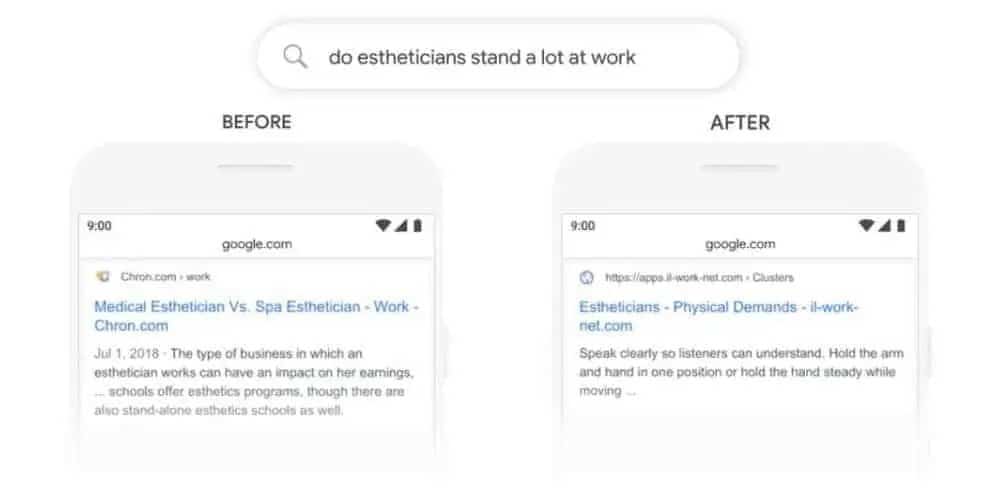

Another query from Google’s post makes the point even clearer. This time, they used “do estheticians stand a lot at work”:

In this example, the BERT update’s improved grasp of whether terms are synonymous or not comes to the fore. For searches prior to the BERT update, Google was taking ‘stand’ in the query to mean the same as ‘stand-alone’.

As such, results regarding ‘stand-alone esthetician schools’ were ranking highly. After the update, noticeable corrections are in evidence on the SERP. The first result instead relates to estheticians standing up at work. That, in turn, reflects the actual meaning of the search query.

The BERT update, then, is set to make a real difference to everyone who uses Google to search. As a result, that will have implications for SEO, but perhaps not as many as you might think.

Implications for SEOs

At its heart, SEO is about ensuring your pages rank as highly as possible for relevant search queries. Any update that impacts how Google compiles its rankings will have an effect on your SEO. If you’ve been doing SEO correctly up to now, though, the effect won’t be a significant one.

The BERT update doesn’t introduce a new ranking factor. It isn’t an update that rewards a different kind of content or different page elements. Instead, BERT seeks to improve how Google itself performs. If a searcher asks a question pertinent to your content, BERT should make it easier for them to find your pages.

How Can I Optimise My SEO Strategy for BERT?

The BERT update isn’t a change to Google’s algorithms that should need any new optimisation efforts. In fact, you can argue that this update makes your SEO and content marketing life far more straightforward. That’s as long as user intent and creating content for users – not Google – are already at the heart of your marketing strategy.

Google Search Liaison Danny Sullivan said as much on Twitter.

Google has long highlighted the importance of creating content for actual users. The search engine doesn’t want anyone to fill their sites with copy ‘optimised’ only for rankings. The best websites, then, will already be writing posts and pages with user intent in mind.

There is obviously a degree of compromise, though, that you must make when it comes to SEO. Keyword research tells you which queries your content must target. Due to the nature of online searches, those keywords are often not real, conversational phrases. More often, they’re the kind of keyword-ese strings of words we talked about earlier.

The BERT update could change all that. If Google can better understand real phrases, searchers will be able to use them more often. Site owners and SEOs, as a result, will then be able to target those phrases in their copy. No longer will you have to crowbar an awkward SEO keyword into your content to help your SERP rankings. That’s what we mean when we say that BERT might make your life easier, from an SEO standpoint.

The only kind of SEO efforts that BERT may negatively impact are those that can already get you into hot water. Paying for link placement in existing content, for instance, will become even more of a no-no.

The unnatural placement of SEO keywords sticks out like a sore thumb, anyway. After the BERT update, Google won’t point users to content that’s not relevant to the real meaning of their queries. That’s regardless of whether the content includes a particular keyword or not.

Thanks to the BERT update, you can focus on user intent even more. BERT and machine learning more generally are taking online search in a new direction. There’s a logical progression toward results reflecting user intent. That’s as opposed to results focussed on exact keywords or queries.

Google wants each SERP to show results that are genuinely relevant to what the searcher is looking for. That’s not the same as ensuring that all results include the exact words a user typed into the search engine. The BERT update allows Google to make a better distinction between the two. It’s for that reason that BERT may be a game-changer.

BERT: The New Name in Search Queries

Bidirectional Encoder Representations from Transformers sounds pretty intimidating. BERT sounds friendly and exciting. The acronym is far more apt. The BERT update is a tweak to Google’s algorithms that could spell good news all round.

The BERT update dramatically improves Google’s ability to understand the true meaning of search queries. The search engine has leveraged machine learning to make its algorithms more intelligent. They’re now able to discern the context in which different words get used. That makes it easier for Google to deliver results that genuinely answer users’ questions.

For SEOs and site owners, too, that’s good news. The BERT update may spell the beginning of the end of trying to wedge clunky keywords into copy. Instead, you can focus on writing the very best content for those people who are actually going to read it. That’s got to come as a relief to writers, readers, and SEO pros everywhere.

About the Author

Nick Brown is the founder & CEO of accelerate agency, a SaaS SEO agency. Nick has launched several successful online businesses, writes for Forbes, has published a book and has grown accelerate from a UK agency to a company that now operates across the US, APAC and EMEA and employs 160 people. He was also once charged at by a mountain gorilla.